Kubernetes in the wild report 2023

Dynatrace

JANUARY 16, 2023

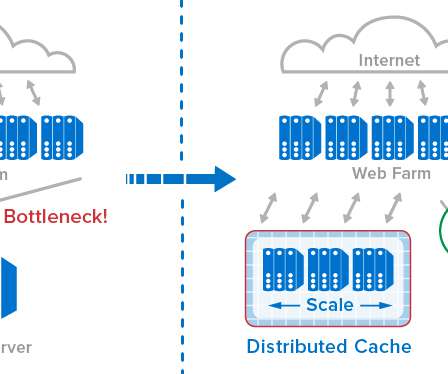

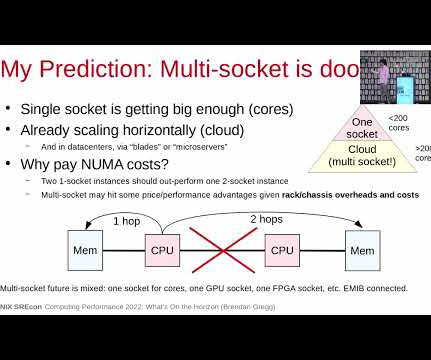

On-premises data centers invest in higher-capacity servers because they provide more flexibility in the long run, and the procurement price of hardware is only one of many cost factors. Of the organizations in the Kubernetes survey, 71% run databases and caches in Kubernetes, a 48% year-over-year increase.
