Crucial Redis Monitoring Metrics You Must Watch

Scalegrid

JANUARY 25, 2024

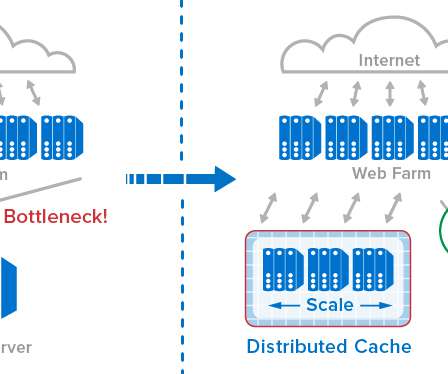

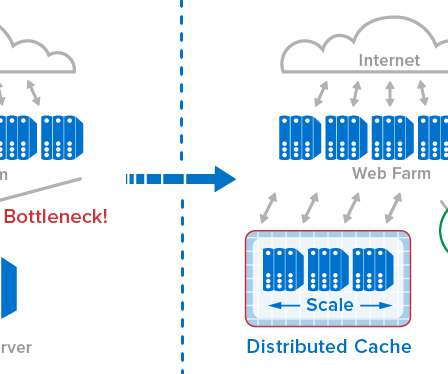

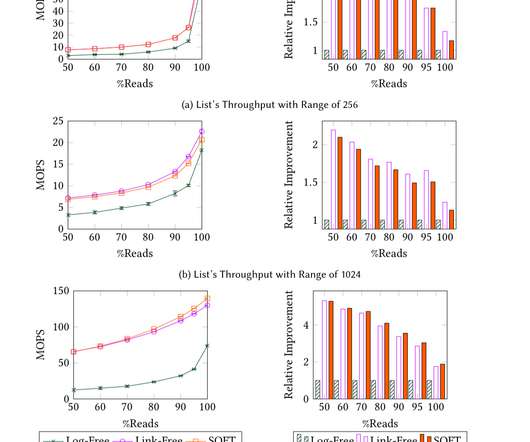

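Effective management of memory with eviction policies such as LRU/LFU, proactive monitoring of the replication process, and tracking of advanced metrics such as the cache hit ratio and persistence indicators are crucial for ensuring data integrity and optimizing Redis's performance.

Cache Hit Ratio

The cache hit ratio represents the efficiency of cache usage: it is the share of key lookups that find an existing key, calculated from the INFO stats counters as keyspace_hits / (keyspace_hits + keyspace_misses).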
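As a minimal sketch, the ratio can be computed from the raw text printed by `redis-cli INFO stats`. The helper names `parse_info` and `cache_hit_ratio` and the sample numbers are hypothetical, chosen for illustration only; no Redis client library is assumed.

```python
from typing import Dict, Optional


def parse_info(raw: str) -> Dict[str, str]:
    """Parse the text returned by Redis INFO into a flat field -> value dict."""
    fields: Dict[str, str] = {}
    for line in raw.splitlines():
        line = line.strip()
        # Skip blank lines and section headers such as "# Stats"
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition(":")
        if sep:
            fields[key] = value
    return fields


def cache_hit_ratio(info: Dict[str, str]) -> Optional[float]:
    """Return hits / (hits + misses), or None if no lookups have been counted yet."""
    hits = int(info.get("keyspace_hits", 0))
    misses = int(info.get("keyspace_misses", 0))
    total = hits + misses
    if total == 0:
        return None
    return hits / total


# Hypothetical excerpt of `redis-cli INFO stats` output; the numbers are made up.
SAMPLE_INFO = """\
# Stats
keyspace_hits:900
keyspace_misses:100
"""

if __name__ == "__main__":
    ratio = cache_hit_ratio(parse_info(SAMPLE_INFO))
    print(f"cache hit ratio: {ratio:.2%}")
```

Because these counters accumulate from server start (or the last `CONFIG RESETSTAT`), a ratio over a recent window would need the difference between two snapshots rather than a single reading.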
