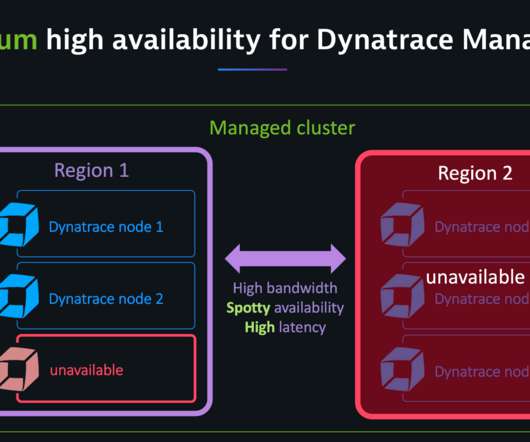

Dynatrace Managed turnkey Premium High Availability for globally distributed data centers (Early Adopter)

Dynatrace

JUNE 25, 2020

For example, a three-node cluster can tolerate the loss of one node, and a cluster with five or more nodes can tolerate the loss of two. The network latency between cluster nodes should be around 10 ms or less.

Our Premium High Availability comes with the following features: an active-active deployment model for optimum hardware utilization.
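The sizing rules above can be sketched as a small check. This is an illustrative sketch only, not Dynatrace tooling: the function names `tolerated_node_failures` and `latency_ok` and the constant `MAX_LATENCY_MS` are hypothetical. The text's "around 10 ms" is treated as a hard limit here for simplicity. Cluster sizes the text does not mention return `None` rather than a guess.

```python
from typing import Optional

# Assumption: the text says "around 10 ms or less"; this sketch treats it as a hard limit.
MAX_LATENCY_MS = 10.0


def tolerated_node_failures(node_count: int) -> Optional[int]:
    """Return how many nodes may go down, using only the two cases the text states."""
    if node_count >= 5:
        return 2  # five or more nodes: two nodes can go down
    if node_count == 3:
        return 1  # three nodes: one node can go down
    return None  # other cluster sizes are not covered by the text


def latency_ok(latency_ms: float) -> bool:
    """Check a measured inter-node latency against the recommended limit."""
    return latency_ms <= MAX_LATENCY_MS
```

For instance, `tolerated_node_failures(3)` returns `1`, and `latency_ok(25.0)` returns `False`, which would flag a link that is likely too slow for the recommended setup.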
