How RevenueCat Manages Caching for Handling over 1.2 Billion Daily API Requests

InfoQ

JANUARY 29, 2024

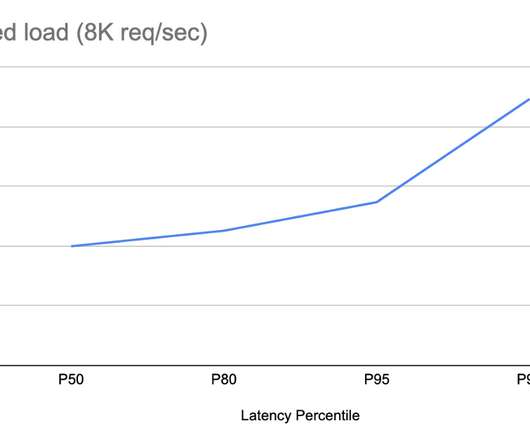

RevenueCat extensively uses caching to improve the availability and performance of its product API while ensuring consistency. The company shared the techniques it uses to deliver the platform, which can handle over 1.2 billion daily API requests.

By Rafal Gancarz
