Architectural Insights: Designing Efficient Multi-Layered Caching With Instagram Example

DZone

FEBRUARY 27, 2024

Leveraging this hierarchical structure can significantly reduce latency and improve overall performance.
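As a rough illustration of the hierarchical lookup the teaser describes, the sketch below chains a small, fast layer in front of a larger, slower one and falls back to an origin loader on a full miss. The names `LRUCache`, `TieredCache`, and `load_from_origin` are hypothetical and not taken from the article, and real deployments (CDN, in-memory store, database) would replace these in-process dictionaries.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity LRU cache standing in for one cache layer (hypothetical)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self._data = OrderedDict()

    def get(self, key):
        if key not in self._data:
            return None
        self._data.move_to_end(key)  # mark as most recently used
        return self._data[key]

    def put(self, key, value):
        self._data[key] = value
        self._data.move_to_end(key)
        if len(self._data) > self.capacity:
            self._data.popitem(last=False)  # evict the least recently used entry


class TieredCache:
    """Checks layers from fastest to slowest, then the origin (hypothetical)."""

    def __init__(self, layers, load_from_origin):
        self.layers = layers                      # ordered fastest first
        self.load_from_origin = load_from_origin  # assumed slow backing store

    def get(self, key):
        for depth, layer in enumerate(self.layers):
            value = layer.get(key)
            if value is not None:  # note: None values cannot be cached here
                # Promote the hit into every faster layer above this one.
                for faster in self.layers[:depth]:
                    faster.put(key, value)
                return value
        # Full miss: pay the origin cost once, then populate every layer.
        value = self.load_from_origin(key)
        for layer in self.layers:
            layer.put(key, value)
        return value
```

With `TieredCache([LRUCache(2), LRUCache(100)], loader)`, a key evicted from the small first layer is still served from the second layer without calling `loader` again, which is where the latency saving would come from.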

The Netflix TechBlog

SEPTEMBER 29, 2022

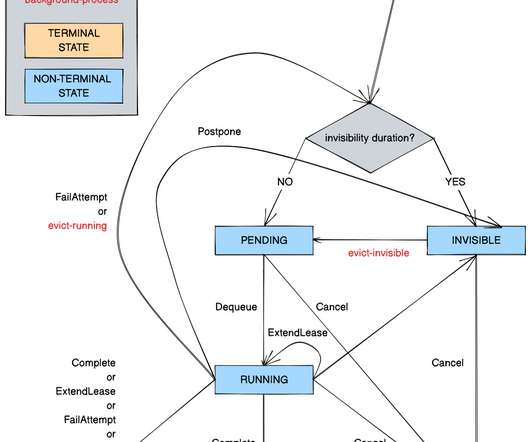

Timestone: Netflix’s High-Throughput, Low-Latency Priority Queueing System with Built-in Support for Non-Parallelizable Workloads, by Kostas Christidis. Introduction: Timestone is a high-throughput, low-latency priority queueing system we built in-house to support the needs of Cosmos, our media encoding platform. Over the past 2.5

IO River

NOVEMBER 2, 2023

A content delivery network (CDN) is a distributed network of servers strategically located across multiple geographical locations to deliver web content to end users more efficiently. What is CDN Architecture? CDN architecture serves as a blueprint or plan that guides the distribution of CDN provider PoPs.

Scalegrid

MARCH 28, 2024

Key Takeaways Redis offers complex data structures and additional features for versatile data handling, while Memcached excels in simplicity with a fast, multi-threaded architecture for basic caching needs. Redis Data Types and Structures The design of Redis’s data structures emphasizes versatility.

IO River

NOVEMBER 2, 2023

A content delivery network (CDN) is a distributed network of servers strategically located across multiple geographical locations to deliver web content to end users more efficiently. What is CDN Architecture? All these elements combined serve as the blueprint of a CDN architecture.

Dynatrace

JANUARY 31, 2024

Retrieval-augmented generation emerges as the standard architecture for LLM-based applications Given that LLMs can generate factually incorrect or nonsensical responses, retrieval-augmented generation (RAG) has emerged as an industry standard for building GenAI applications.

The Netflix TechBlog

NOVEMBER 17, 2022

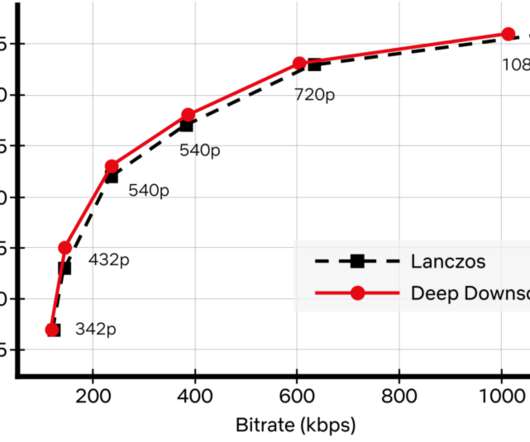

While conventional video codecs remain prevalent, NN-based video encoding tools are flourishing and closing the performance gap in terms of compression efficiency. We employed an adaptive network design that is applicable to the wide variety of resolutions we use for encoding. How do we apply neural networks at scale efficiently?

Adrian Cockcroft

JANUARY 20, 2023

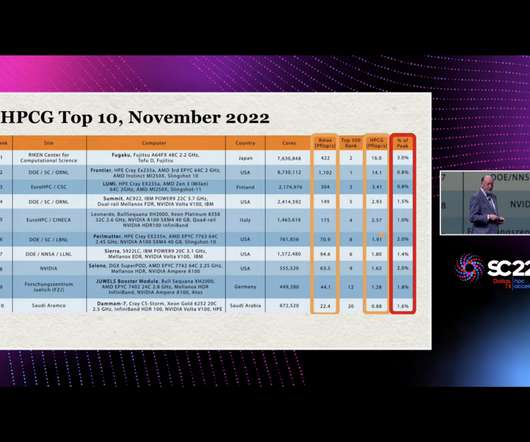

Here’s some predictions I’m making: Jack Dongarra’s efforts to highlight the low efficiency of the HPCG benchmark as an issue will influence the next generation of supercomputer architectures to optimize for sparse matrix computations. Next generation architectures will use CXL3.0

Scalegrid

MARCH 14, 2024

The architecture usually integrates several private, public, and on-premises infrastructures. In practice, a hybrid cloud operates by melding resources and services from multiple computing environments, which necessitates effective coordination, orchestration, and integration to work efficiently.

Scalegrid

FEBRUARY 8, 2024

Their design emphasizes increasing availability by spreading out files among different nodes or servers — this approach significantly reduces risks associated with losing or corrupting data due to node failure. Variations within these storage systems are called distributed file systems.

Dynatrace

SEPTEMBER 13, 2023

This is a set of best practices and guidelines that help you design and operate reliable, secure, efficient, cost-effective, and sustainable systems in the cloud. The framework comprises six pillars: Operational Excellence, Security, Reliability, Performance Efficiency, Cost Optimization, and Sustainability.

Scalegrid

JANUARY 8, 2024

This article delves into the specifics of how AI optimizes cloud efficiency, ensures scalability, and reinforces security, providing a glimpse at its transformative role without giving away extensive details. Using AI for Enhanced Cloud Operations The integration of AI in cloud computing is enhancing operational efficiency in several ways.

Dynatrace

OCTOBER 4, 2022

While data lakes and data warehousing architectures are commonly used modes for storing and analyzing data, a data lakehouse is an efficient third way to store and analyze data that unifies the two architectures while preserving the benefits of both. Data lakehouses deliver the query response with minimal latency.

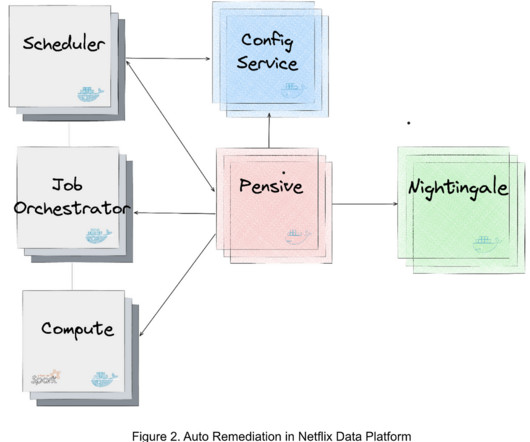

The Netflix TechBlog

MARCH 4, 2024

We have deployed Auto Remediation in production for handling memory configuration errors and unclassified errors of Spark jobs and observed its efficiency and effectiveness (e.g., For efficient error handling, Netflix developed an error classification service, called Pensive, which leverages a rule-based classifier for error classification.

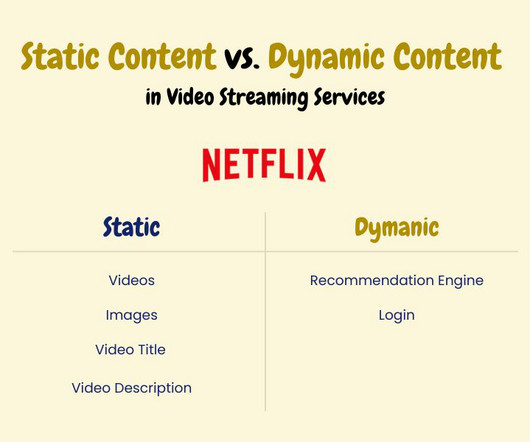

IO River

NOVEMBER 2, 2023

They need to deliver impeccable performance without breaking the bank. According to recent industry statistics, global streaming has seen an uptick of 30% in the past year, underscoring the importance of efficient CDN architecture strategies. This is where a well-architected Content Delivery Network (CDN) shines.

Scalegrid

MARCH 22, 2024

They can also bolster uptime and limit latency issues or potential downtimes. Choosing the Right Cloud Services Choosing the right cloud services is crucial in developing an efficient multi cloud strategy. They simplify how you orchestrate everything across the diverse ecosystem of cloud services.

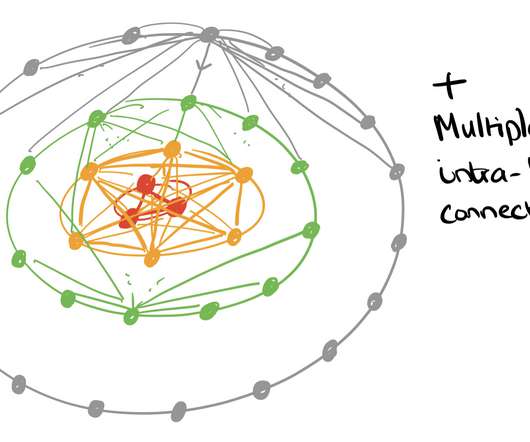

ACM Sigarch

MAY 31, 2023

Introduction Memory systems are evolving into heterogeneous and composable architectures. Heterogeneous and Composable Memory (HCM) offers a feasible solution for terabyte- or petabyte-scale systems, addressing the performance and efficiency demands of emerging big-data applications. The recently announced CXL3.0

Percona

MARCH 20, 2023

When designing an architecture, many components need to be considered before deciding on the best solution. In this scenario, it is also crucial to be efficient in resource utilization and to scale frugally. Let us also take a look at the latency: here the situation starts to be a little bit more complicated.

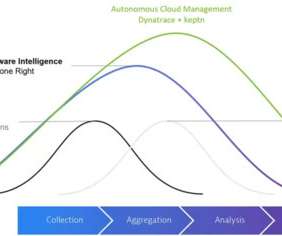

Dynatrace

OCTOBER 1, 2021

As dynamic systems architectures increase in complexity and scale, IT teams face mounting pressure to track and respond to conditions and issues across their multi-cloud environments. An advanced observability solution can also be used to automate more processes, increasing efficiency and innovation among Ops and Apps teams.

The Netflix TechBlog

SEPTEMBER 8, 2020

We tried a few iterations of what this new service should look like, and eventually settled on a modern architecture that aimed to give more control of the API experience to the client teams. For us, it means that we now need to have ~15 MDN tabs open when writing routes :) Let’s briefly discuss the architecture of this microservice.

Dynatrace

DECEMBER 15, 2022

ITOps refers to the process of acquiring, designing, deploying, configuring, and maintaining equipment and services that support an organization’s desired business outcomes. This includes response time, accuracy, speed, throughput, uptime, CPU utilization, and latency. Performance. What does IT operations do?

Percona

APRIL 17, 2023

The swap issue is explained in the excellent article by Jeremy Cole on Swap Insanity and the NUMA Architecture. The CFQ scheduler works well for many general use cases but lacks latency guarantees. The deadline scheduler excels at latency-sensitive use cases (like databases), and noop is closer to no scheduling at all.

Dynatrace

APRIL 5, 2021

The 2014 launch of AWS Lambda marked a milestone in how organizations use cloud services to deliver their applications more efficiently, by running functions at the edge of the cloud without the cost and operational overhead of on-premises servers. AWS continues to improve how it handles latency issues. Dynatrace news.

The Morning Paper

NOVEMBER 10, 2019

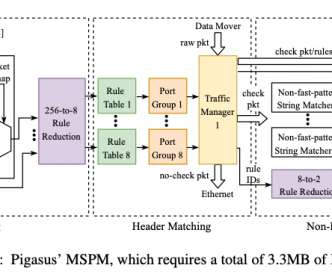

It’s been clear for a while that software designed explicitly for the data center environment will increasingly want/need to make different design trade-offs to e.g. general-purpose systems software that you might install on your own machines. The desire for CPU efficiency and lower latencies is easy to understand.

O'Reilly Software

DECEMBER 11, 2017

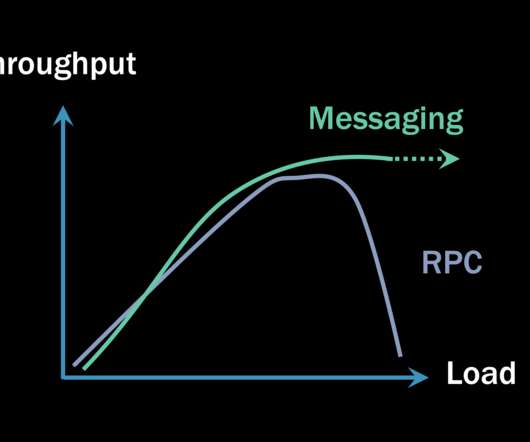

A message-based microservices architecture offers many advantages, making solutions easier to scale and expand with new services. The asynchronous nature of interservice interactions inherent to this architecture, however, poses challenges for user-initiated actions such as create-read-update-delete (CRUD) requests on an object.

The Morning Paper

OCTOBER 26, 2020

On the surface this is a paper about fast data ingestion from high-volume streams, with indexing to support efficient querying. Helios also serves as a reference architecture for how Microsoft envisions its next generation of distributed big-data processing systems being built. Why do we need a new reference architecture?

The Netflix TechBlog

JUNE 4, 2019

Because microprocessors are so fast, computer architecture design has evolved towards adding various levels of caching between compute units and the main memory, in order to hide the latency of bringing the bits to the brains. This avoids thrashing caches too much for B and evens out the pressure on the L3 caches of the machine.

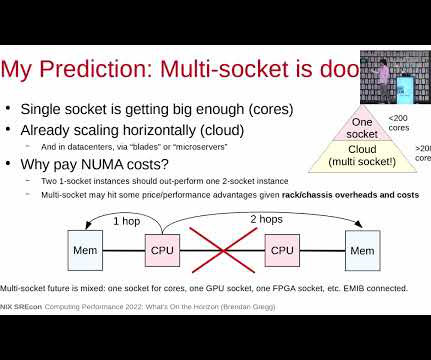

Brendan Gregg

FEBRUARY 28, 2023

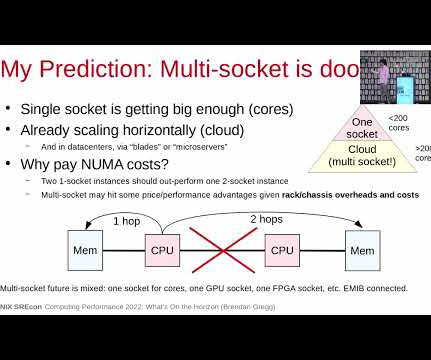

My personal opinion is that I don't see a widespread need for more capacity given horizontal scaling and servers that can already exceed 1 Tbyte of DRAM; bandwidth is also helpful, but I'd be concerned about the increased latency for adding a hop to more memory. Ford, et al., “TCP

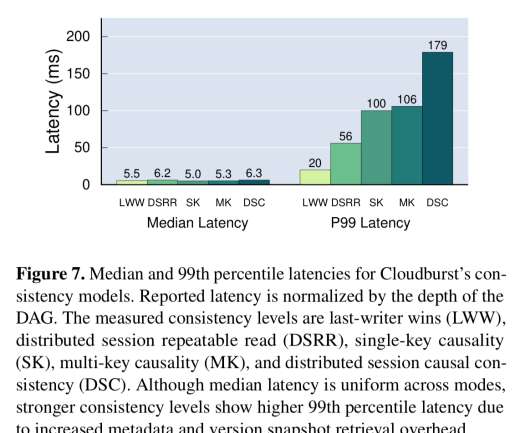

The Morning Paper

FEBRUARY 6, 2020

Stateless is fine until you need state, at which point the coarse-grained solutions offered by current platforms limit the kinds of application designs that work well. On the Cloudburst design team’s wish list: A running function’s ‘hot’ data should be kept physically nearby for low-latency access.

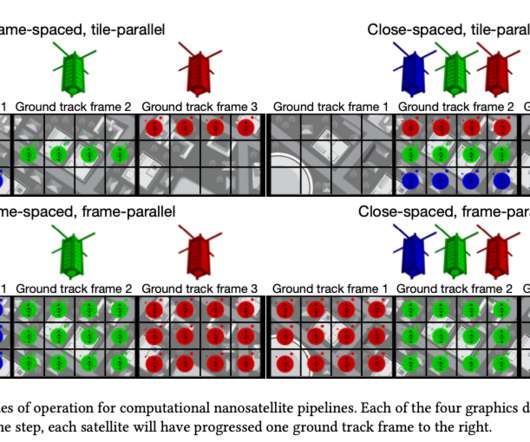

The Morning Paper

OCTOBER 11, 2020

Only space system architects don’t call it request-response, they call it a ‘bent-pipe architecture.’ In the bent-pipe architecture a satellite gathers and stores data until it is near a ground station, and then transmits whatever it has. Orbital Edge Computing (OEC) is designed to do just that. Satellites are changing!

The Morning Paper

MAY 19, 2019

Last week we learned about the increased tail-latency sensitivity of microservices-based applications with high RPC fan-outs. Seer uses estimates of queue depths to mitigate latency spikes on the order of 10-100ms, in conjunction with a cluster manager. So what we have here is a glimpse of the limits for low-latency RPCs under load.

The Morning Paper

NOVEMBER 15, 2020

Improving the efficiency with which we can coordinate work across a collection of units (see the Universal Scalability Law). With more nodes and more coordination comes more complexity, both in design and operation. This makes the whole system latency sensitive. Increasing the amount of work we can do on a single unit.

The Morning Paper

JUNE 23, 2019

Why are developers using RInK systems as part of their design? Keeping application services stateless is a design guideline that achieved widespread adoption following the publication of the 12-factor app manifesto. The network latency of fetching data over the network, even considering fast data center networks. Who knew! ;).

Particular Software

SEPTEMBER 20, 2021

Some will claim that any type of RPC communication ends up being faster (meaning it has lower latency) than any equivalent invocation using asynchronous messaging. There are more steps, so the increased latency is easily explained. Garbage collectors are designed under the assumption that memory should be cleaned up reasonably quickly.

Dynatrace

SEPTEMBER 30, 2021

Like any move, a cloud migration requires a lot of planning and preparation, but it also has the potential to transform the scope, scale, and efficiency of how you deliver value to your customers. This can fundamentally transform how they work, make processes more efficient, and improve the overall customer experience. Here are three.

The Morning Paper

SEPTEMBER 10, 2019

When each of those use cases is powered by a dedicated back-end, investments in better performance, improved scalability and efficiency etc. are divided. That’s hard for many reasons, including the differing trade-offs between throughput and latency that need to be made across the use cases. Reporting and dashboarding use cases (e.g.

Smashing Magazine

SEPTEMBER 28, 2022

On design systems, UX, web performance and CSS/JS. Server caches help lower the latency between a frontend and a backend; since key-value stores typically answer lookups faster than traditional relational SQL databases, a cache can significantly reduce an API’s response time. Common WebSocket Architecture. Event Sourcing Architecture.
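To make the server-cache point concrete, here is a minimal cache-aside sketch with a time-to-live, assuming an in-process dictionary in place of a real key-value store. `TTLCache`, `get_or_load`, and the `loader` callback are hypothetical names introduced for illustration, not an API from the article.

```python
import time


class TTLCache:
    """Cache-aside helper: serve fresh entries, reload expired ones (hypothetical)."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock      # injectable so expiry can be tested without sleeping
        self._entries = {}      # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]     # fresh hit: the backend is not touched
        value = loader(key)     # miss or expired: ask the slow backend
        self._entries[key] = (now + self.ttl, value)
        return value
```

An API handler could call `cache.get_or_load(user_id, fetch_user_from_db)`, where `fetch_user_from_db` is an assumed database query function. The TTL bounds how stale a response can be, at the cost of one backend query per key per expiry window.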

The Morning Paper

JUNE 13, 2019

Making queries to an inference engine has many of the same throughput, latency, and cost considerations as making queries to a datastore, and more and more applications are coming to depend on such queries. The following figure highlights how just one of these variables, batch size, impacts throughput and latency on ResNet50.

ACM Sigarch

DECEMBER 6, 2018

Each of these categories opens up challenging problems in AI/visual algorithms, high-density computing, bandwidth/latency, distributed systems. To foster research in these categories, we provide an overview of each of these categories to understand the implications on workload analysis and HW/SW architecture research.

Percona

JANUARY 24, 2023

Individual processes generate WAL records, and latency is very crucial for transactions. Summary Some of the key points/takeaways I have from the discussion in the community and as well as in my simple tests: The compression method pglz available in the older version was not very efficient.

The Morning Paper

OCTOBER 10, 2019

As image recognition architectures are evolving quickly, we want to iterate our three specialized embeddings with modern architectures to improve our three visual search products. Model architecture. Building blocks: classification and multi-task learning. either 1 or 0) that collapses the range into two poles. A/B testing.

Smashing Magazine

AUGUST 3, 2023

These pages serve as a pivotal tool in our digital marketing strategy, not only providing valuable information about our services but also designed to be easily discoverable through search engines. This is why the async and deferred attributes are crucial, as they ensure an efficient, seamless web browsing experience.
