Update of our SSO services incident

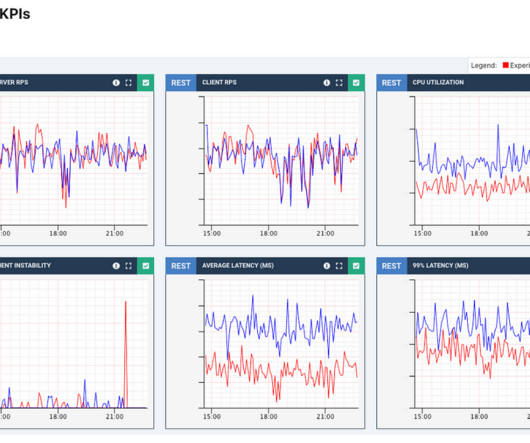

Dynatrace

JANUARY 23, 2023

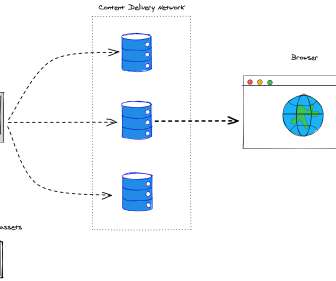

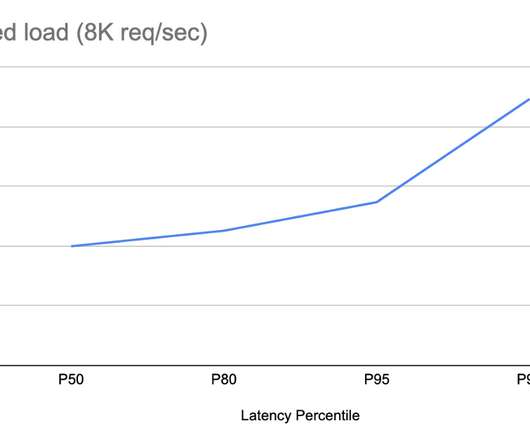

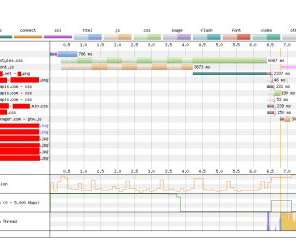

These include improving API traffic management and caching mechanisms to reduce server and network load, optimizing database queries, and adding compute resources, to name a few. Some of these, such as the added compute, are already in place; others require more development and testing.
