The Three Cs: Concatenate, Compress, Cache

CSS Wizardry

OCTOBER 16, 2023

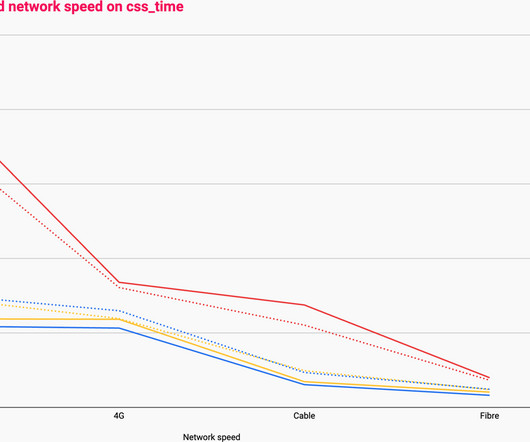

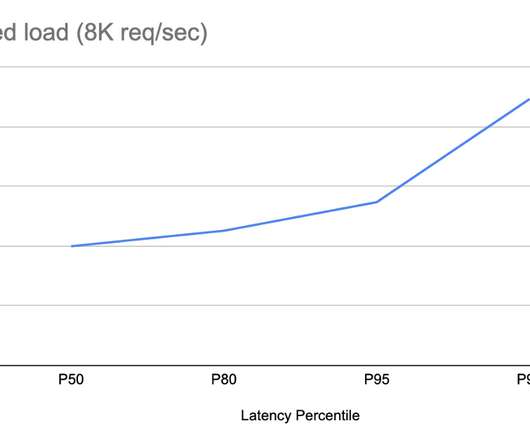

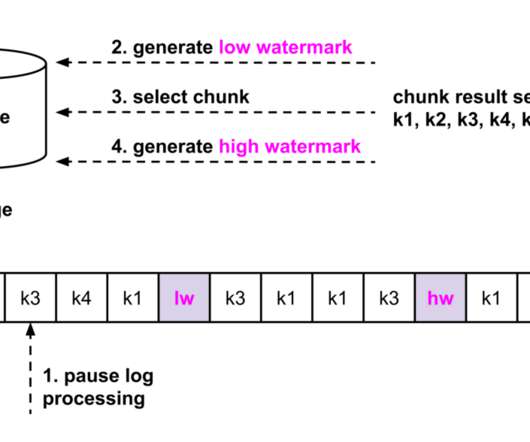

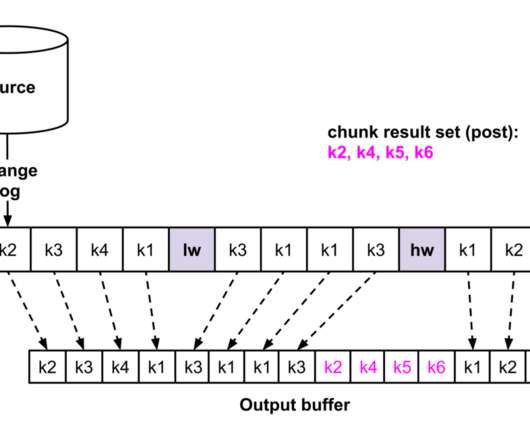

Caching them at the other end: How long should we cache files on a user’s device?

Given that 66% of all websites (and 77% of all requests) are running HTTP/2, I will not discuss concatenation strategies for HTTP/1.1 in this article.

4,362ms of cumulative latency; 240ms of cumulative download. That’s almost 22× more!
