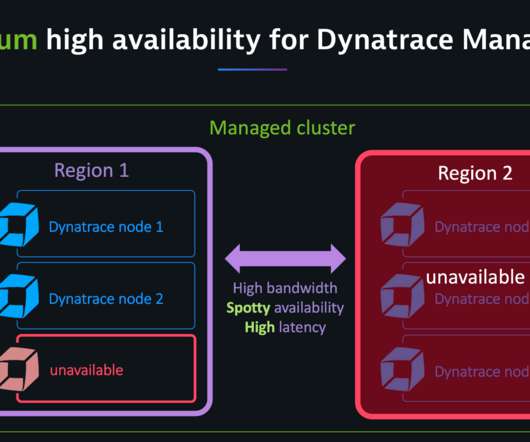

Dynatrace Managed turnkey Premium High Availability for globally distributed data centers (Early Adopter)

Dynatrace

JUNE 25, 2020

Dynatrace Managed is intrinsically highly available because it stores three copies of all events, user sessions, and metrics across its cluster nodes. For example, in a three-node cluster, one node can go down; in a cluster with five or more nodes, two nodes can go down. Premium High Availability extends this with turnkey high availability across globally distributed data centers.
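As a rough illustration of the node-failure rule described above, here is a minimal Python sketch. The function name `tolerated_node_failures` is hypothetical and not part of any Dynatrace API. The values for three nodes and for five or more nodes come from the text; the values for four nodes and for fewer than three nodes are assumptions made only to complete the example.

```python
def tolerated_node_failures(node_count: int) -> int:
    """Return how many cluster nodes can go down, per the rule of thumb above.

    Hypothetical helper for illustration only; not a Dynatrace API.
    """
    if node_count < 1:
        raise ValueError("node_count must be at least 1")
    if node_count >= 5:
        # Stated in the text: five or more nodes tolerate two failures.
        return 2
    if node_count >= 3:
        # Stated in the text for three nodes.
        # ASSUMPTION: a four-node cluster behaves like a three-node cluster.
        return 1
    # ASSUMPTION: fewer than three nodes cannot tolerate any node failure.
    return 0


if __name__ == "__main__":
    for n in (3, 5, 7):
        print(f"{n} nodes: up to {tolerated_node_failures(n)} node(s) can go down")
```

This only models node failures inside a single cluster; it does not say anything about the loss of an entire data center, which is the scenario Premium High Availability targets.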
