Transforming Business Outcomes Through Strategic NoSQL Database Selection

DZone

NOVEMBER 25, 2023

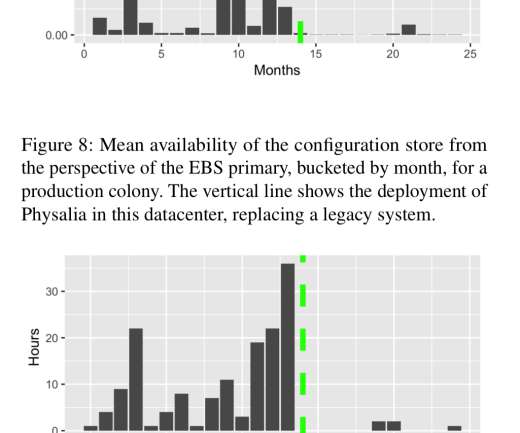

We often dwell on the technical aspects of database selection, focusing on performance metrics, storage capacity, and querying capabilities. Factors like read and write speed, latency, and data distribution methods are essential. In a detailed article, we've discussed how to align a NoSQL database with specific business needs.
