Optimize your environment: Unveiling Dynatrace Hyper-V extension for enhanced performance and efficient troubleshooting

Dynatrace

OCTOBER 23, 2023

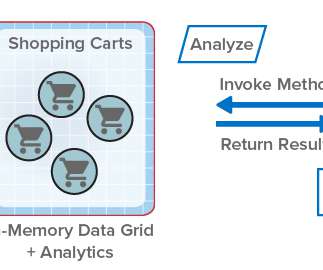

This leads to a more efficient and streamlined experience for users. Lastly, monitoring and maintaining system health within a virtual environment, which includes efficient troubleshooting and issue resolution, can pose a significant challenge for IT teams.
