Business Flow: Why IT operations teams should monitor business processes

Dynatrace

MARCH 12, 2024

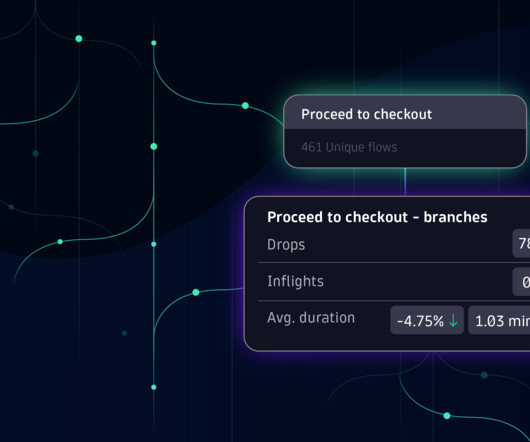

The business process observability challenge

Increasingly dynamic business conditions demand business agility; reacting to a supply chain disruption and optimizing order fulfillment are simple but illustrative examples. Most business processes are not monitored. First and foremost, it’s a data problem.
