The Power of Caching: Boosting API Performance and Scalability

DZone

AUGUST 16, 2023

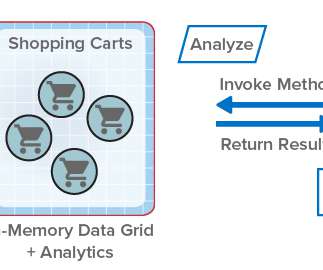

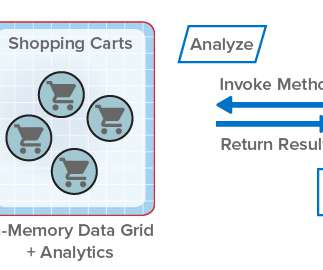

Benefits of Caching

Improved performance: Caching eliminates the need to retrieve data from the original source every time, resulting in faster response times and reduced latency.

Reduced server load: By serving cached content, the load on the server is reduced, allowing it to handle more requests and improving overall scalability.
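Both benefits can be seen in a few lines of code. The following is a minimal sketch, not the article's own implementation: `TTLCache`, `get_or_load`, `fetch_from_origin`, and the 60-second expiry are all hypothetical names and values chosen for illustration. It assumes a single-process, in-memory cache with time-based expiry; a production API would more likely rely on a shared cache or HTTP caching headers.

```python
import time
from typing import Any, Callable, Dict, List, Tuple


class TTLCache:
    """Tiny in-memory cache whose entries expire after ttl_seconds (illustrative only)."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl_seconds = ttl_seconds
        self._clock = clock  # injectable so expiry can be exercised without sleeping
        self._store: Dict[str, Tuple[float, Any]] = {}  # key -> (expires_at, value)
        self.hits = 0
        self.misses = 0

    def get_or_load(self, key: str, loader: Callable[[str], Any]) -> Any:
        """Return the cached value for key, calling loader only on a miss or after expiry."""
        now = self._clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            self.hits += 1           # served from memory: no trip to the origin
            return entry[1]
        self.misses += 1             # absent or expired: fetch and remember
        value = loader(key)
        self._store[key] = (now + self.ttl_seconds, value)
        return value


# Hypothetical stand-in for the "original source" (a database or upstream API).
origin_calls: List[str] = []


def fetch_from_origin(key: str) -> str:
    origin_calls.append(key)         # record every request that reaches the origin
    return f"payload for {key}"


cache = TTLCache(ttl_seconds=60)
first = cache.get_or_load("/users/1", fetch_from_origin)   # miss: goes to the origin
second = cache.get_or_load("/users/1", fetch_from_origin)  # hit: served from the cache
```

After the two calls, the origin has been contacted once while two responses were served, which is the reduced server load described above. The expiry time is the main design choice: a longer value saves more origin requests but lets clients see stale data for longer.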
