Uber’s Big Data Platform: 100+ Petabytes with Minute Latency

Uber Engineering

OCTOBER 17, 2018

Uber is committed to delivering safer and more reliable transportation across our global markets.

The Netflix TechBlog

SEPTEMBER 2, 2020

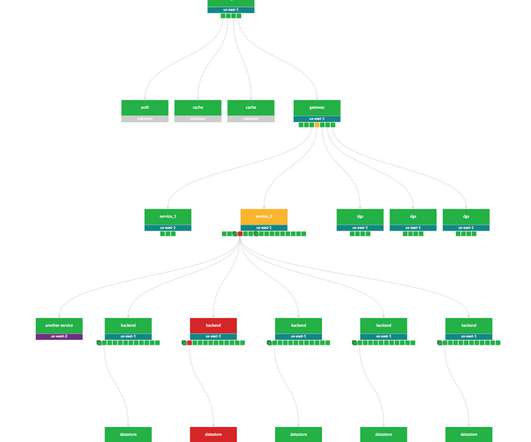

While this abundance of dashboards and information is by no means unique to Netflix, it certainly holds true within our microservices architecture. Distributed tracing is the process of generating, transporting, storing, and retrieving traces in a distributed system. As you can imagine, this comes with very real storage costs.

Alex Russell

OCTOBER 22, 2017

One distinct trend is a belief that a JavaScript framework and Single-Page Architecture (SPA) are a must for PWA development. Contended, over-subscribed cells can make “fast” networks brutally slow, transport variance can make TCP much less efficient, and the bursty nature of web traffic works against us.

The Netflix TechBlog

MAY 5, 2021

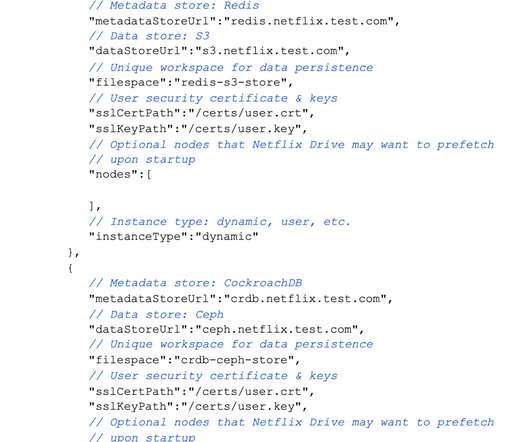

In future posts, we will do an architectural deep dive into the several components of Netflix Drive. Netflix Drive relies on a data store that will be the persistent storage layer for assets, and a metadata store that will provide a relevant mapping from the file system hierarchy to the data store entities.
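The split between a data store and a metadata store described in this excerpt can be illustrated with a small in-memory sketch. This is not Netflix Drive's implementation or API: the class names `DataStore` and `MetadataStore`, the helpers `write_file` and `read_file`, and the use of a content hash as the storage key are all hypothetical choices made only to show how a path in a file system hierarchy might resolve to an entity in a separate storage layer.

```python
import hashlib
import posixpath
from typing import Dict, List, Optional


class DataStore:
    """Hypothetical persistent storage layer: opaque keys mapped to asset bytes."""

    def __init__(self) -> None:
        self._objects: Dict[str, bytes] = {}

    def put(self, content: bytes) -> str:
        # Assumption: a content hash is used as the key; a real store may differ.
        key = hashlib.sha256(content).hexdigest()
        self._objects[key] = content
        return key

    def get(self, key: str) -> bytes:
        return self._objects[key]


class MetadataStore:
    """Hypothetical mapping from file system paths to data store keys."""

    def __init__(self) -> None:
        self._path_to_key: Dict[str, str] = {}

    def link(self, path: str, key: str) -> None:
        self._path_to_key[posixpath.normpath(path)] = key

    def resolve(self, path: str) -> Optional[str]:
        return self._path_to_key.get(posixpath.normpath(path))

    def list_dir(self, directory: str) -> List[str]:
        # Returns only the files that sit directly inside `directory`.
        directory = posixpath.normpath(directory)
        return sorted(
            posixpath.basename(p)
            for p in self._path_to_key
            if posixpath.dirname(p) == directory
        )


def write_file(meta: MetadataStore, data: DataStore, path: str, content: bytes) -> None:
    """Store the bytes, then record which key the path points to."""
    meta.link(path, data.put(content))


def read_file(meta: MetadataStore, data: DataStore, path: str) -> bytes:
    """Resolve the path through the metadata store, then fetch the bytes."""
    key = meta.resolve(path)
    if key is None:
        raise FileNotFoundError(path)
    return data.get(key)
```

In this sketch the hierarchy lives entirely in the metadata store, so a file can be renamed or moved by relinking its path without touching the stored bytes.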

Smashing Magazine

JANUARY 11, 2021

Defining The Environment: choosing a framework, baseline performance cost, Webpack, dependencies, CDN, front-end architecture, CSR, SSR, CSR + SSR, static rendering, prerendering, PRPL pattern. Estimated Input Latency tells us if we are hitting that threshold, and ideally, it should be below 50ms.

Smashing Magazine

JANUARY 6, 2020

Estimated Input Latency tells us if we are hitting that threshold, and ideally, it should be below 50ms. Designed for the modern web, it responds to actual congestion rather than to packet loss as TCP does; it is significantly faster, with higher throughput and lower latency, and the algorithm works differently.

Smashing Magazine

JANUARY 7, 2019

Estimated Input Latency tells us if we are hitting that threshold, and ideally, it should be below 50ms. Keeping progressive enhancement as the guiding principle of your front-end architecture and deployment is a safe bet. Consider using the PRPL pattern and an app shell architecture. Use progressive enhancement as a default.
