Evolving from Rule-based Classifier: Machine Learning Powered Auto Remediation in Netflix Data…

The Netflix TechBlog

MARCH 4, 2024

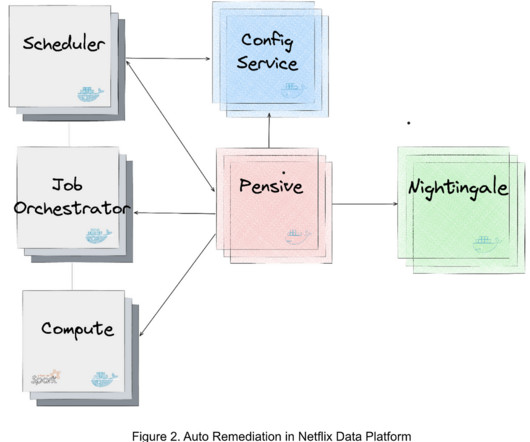

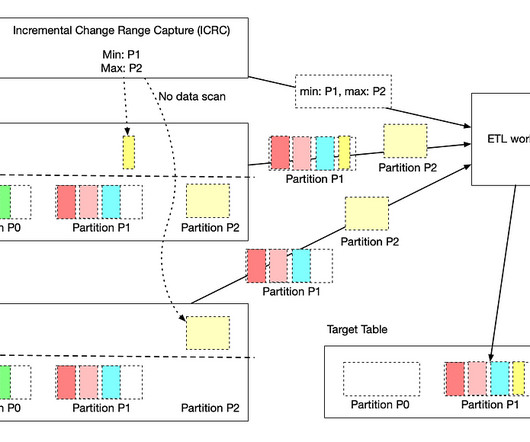

Operational automation–including but not limited to, auto diagnosis, auto remediation, auto configuration, auto tuning, auto scaling, auto debugging, and auto testing–is key to the success of modern data platforms. Upon further profiling, we found that most of the latency came from the candidate generated step (i.e.,

Let's personalize your content