Dynatrace supports SnapStart for Lambda as an AWS launch partner

Dynatrace

NOVEMBER 28, 2022

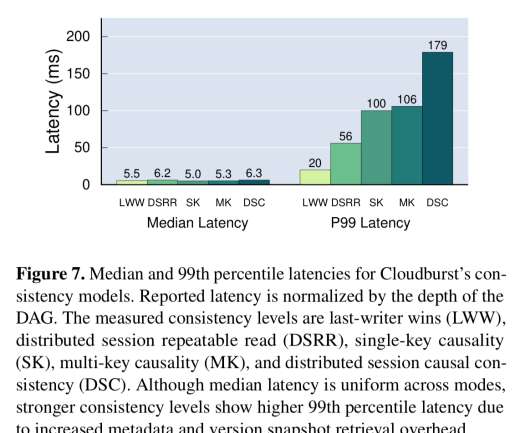

Dynatrace is proud to be an AWS launch partner in support of AWS Lambda SnapStart. The new AWS capability lets customers reduce the startup latency of their functions from several seconds to under a second (up to 10 times faster) at P99 (the 99th percentile of latency).
