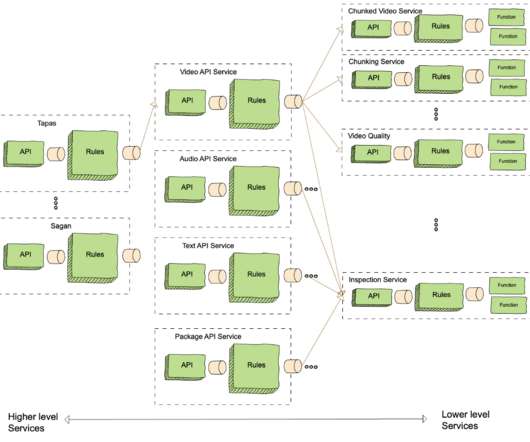

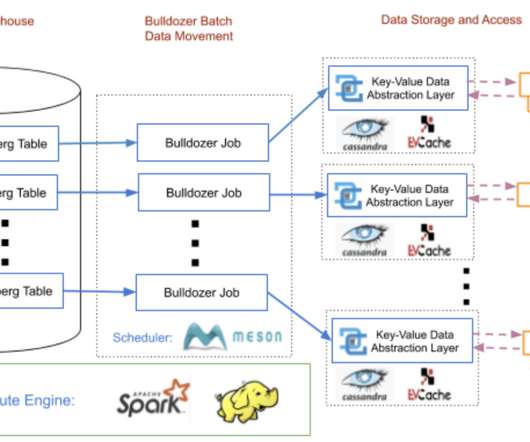

Rebuilding Netflix Video Processing Pipeline with Microservices

The Netflix TechBlog

JANUARY 10, 2024

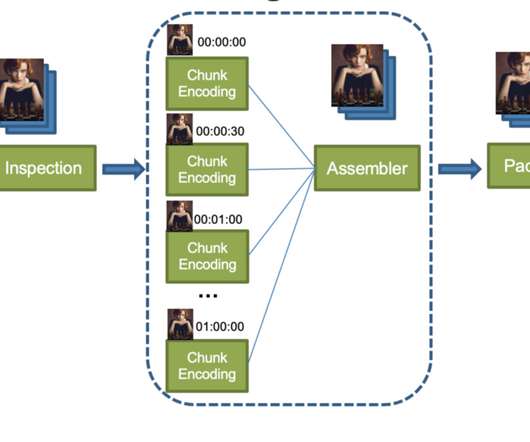

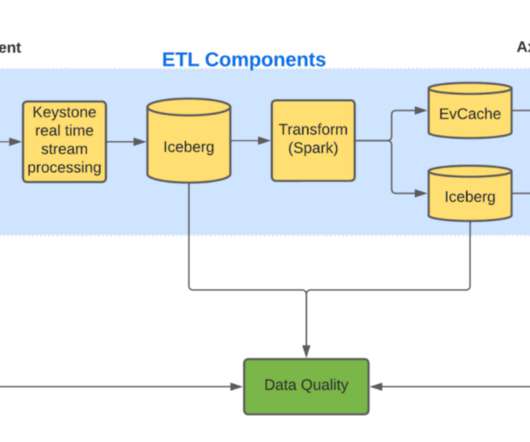

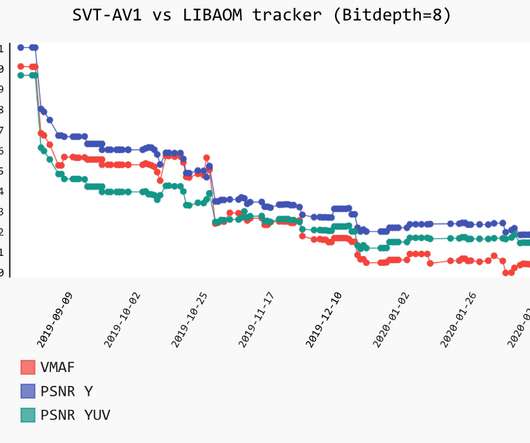

Future blogs will provide deeper dives into each service, sharing insights and lessons learned from this process. The Netflix video processing pipeline went live with the launch of our streaming service in 2007. The Netflix video processing pipeline went live with the launch of our streaming service in 2007.

Let's personalize your content