Architectural Insights: Designing Efficient Multi-Layered Caching With Instagram Example

DZone

FEBRUARY 27, 2024

Leveraging this hierarchical structure can significantly reduce latency and improve overall performance.

DZone

JUNE 22, 2023

API performance optimization is the process of improving the speed, scalability, and reliability of APIs. It involves a combination of techniques and best practices aimed at reducing latency, improving user experience, and increasing the overall efficiency of the system.

The Netflix TechBlog

SEPTEMBER 29, 2022

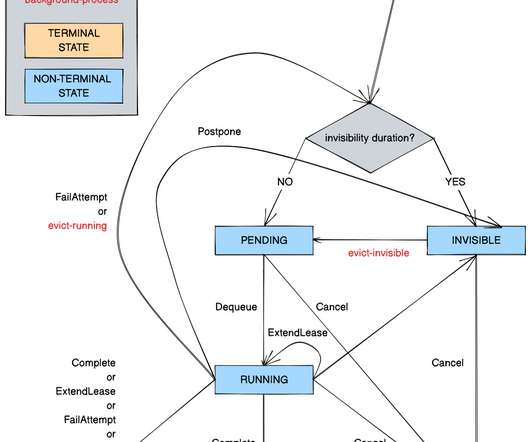

Timestone: Netflix’s High-Throughput, Low-Latency Priority Queueing System with Built-in Support for Non-Parallelizable Workloads, by Kostas Christidis. Introduction: Timestone is a high-throughput, low-latency priority queueing system we built in-house to support the needs of Cosmos, our media encoding platform. Over the past 2.5

The Netflix TechBlog

SEPTEMBER 3, 2021

Remote calls are never free; they impose extra latency, increase probability of an error, and consume network bandwidth. How can we achieve a similar functionality when designing our gRPC APIs? This (alongside some other techniques like ZigZag encoding for signed types) makes protobuf messages space-efficient.
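
The ZigZag encoding mentioned in this excerpt can be illustrated with a minimal sketch. It assumes 64-bit signed inputs, and the helper names `zigzag_encode` and `zigzag_decode` are hypothetical, not part of the protobuf library. ZigZag maps signed integers to unsigned ones so that values of small magnitude, positive or negative, stay small and therefore need few bytes when written as a varint.

```python
def zigzag_encode(n: int) -> int:
    """Map a signed 64-bit integer to an unsigned one (0, -1, 1, -2 -> 0, 1, 2, 3)."""
    # n >> 63 is an arithmetic shift: 0 for non-negative n, -1 for negative n.
    return (n << 1) ^ (n >> 63)


def zigzag_decode(z: int) -> int:
    """Invert zigzag_encode."""
    # The lowest bit carries the sign; the remaining bits carry the magnitude.
    return (z >> 1) ^ -(z & 1)


if __name__ == "__main__":
    for value in (0, -1, 1, -2, 2, -(2**63), 2**63 - 1):
        encoded = zigzag_encode(value)
        assert zigzag_decode(encoded) == value
        print(value, "->", encoded)
```

Without this mapping, a negative number such as -1 would be treated as a very large unsigned value and take the maximum varint length.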

Scalegrid

JANUARY 25, 2024

Key Takeaways: Critical performance indicators such as latency, CPU usage, memory utilization, hit rate, and number of connected clients/slaves/evictions must be monitored to maintain Redis’s high throughput and low latency capabilities. These essential data points heavily influence both stability and efficiency within the system.

Scalegrid

MARCH 28, 2024

Redis Data Types and Structures: The design of Redis’s data structures emphasizes versatility. Snapshots provide point-in-time captures of the dataset, which are efficient for recovery on startup. It is designed to cache plain text values, offering fast read and write access to frequently accessed data.

The Netflix TechBlog

JANUARY 10, 2024

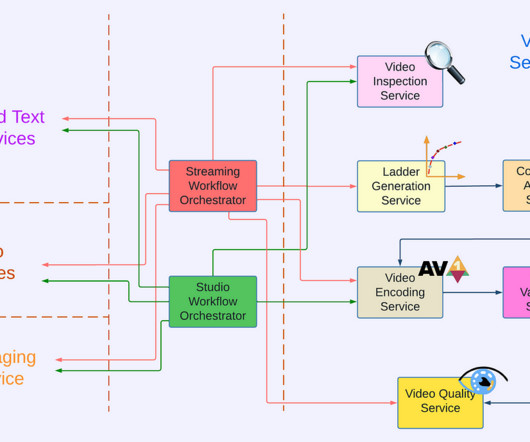

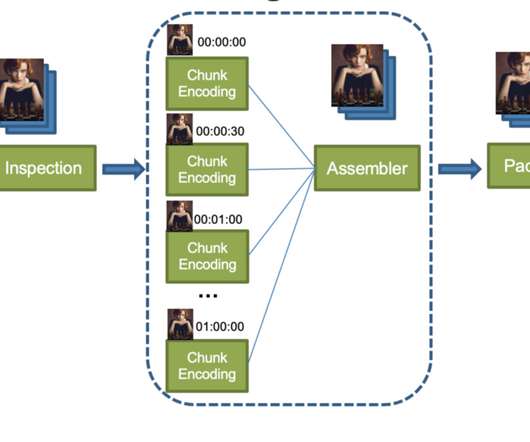

This architecture shift greatly reduced the processing latency and increased system resiliency. We expanded pipeline support to serve our studio/content-development use cases, which had different latency and resiliency requirements as compared to the traditional streaming use case. divide the input video into small chunks 2.

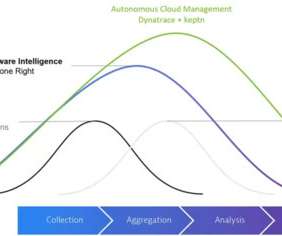

Dynatrace

SEPTEMBER 13, 2023

This is a set of best practices and guidelines that help you design and operate reliable, secure, efficient, cost-effective, and sustainable systems in the cloud. The framework comprises six pillars: Operational Excellence, Security, Reliability, Performance Efficiency, Cost Optimization, and Sustainability.

Dynatrace

JANUARY 31, 2024

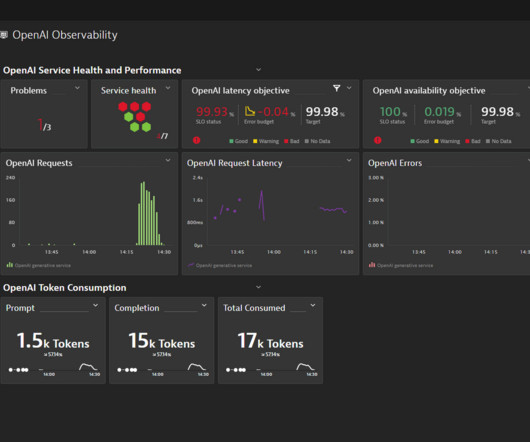

Model observability provides visibility into resource consumption and operation costs, aiding in optimization and ensuring the most efficient use of available resources. Observing AI models Running AI models at scale can be resource-intensive. However, organizations must consider which use cases will bring them the biggest ROI.

The Netflix TechBlog

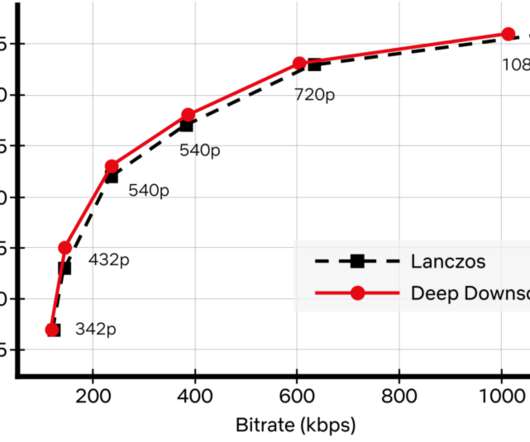

NOVEMBER 17, 2022

While conventional video codecs remain prevalent, NN-based video encoding tools are flourishing and closing the performance gap in terms of compression efficiency. We employed an adaptive network design that is applicable to the wide variety of resolutions we use for encoding. How do we apply neural networks at scale efficiently?

Dynatrace

JANUARY 17, 2024

As organizations turn to artificial intelligence for operational efficiency and product innovation in multicloud environments, they have to balance the benefits with skyrocketing costs associated with AI. The good news is AI-augmented applications can make organizations massively more productive and efficient. Use containerization.

The Netflix TechBlog

SEPTEMBER 24, 2021

Figure 1: A Simplified Video Processing Pipeline. With this architecture, chunk encoding is very efficient and processed in distributed cloud computing instances. Uploading and downloading data always come with a penalty, namely latency.

Dynatrace

JUNE 7, 2023

A typical design pattern is the use of a semantic search over a domain-specific knowledge base, like internal documentation, to provide the required context in the prompt. With these latency, reliability, and cost measurements in place, your operations team can now define their own OpenAI dashboards and SLOs.

Scalegrid

JANUARY 8, 2024

This article delves into the specifics of how AI optimizes cloud efficiency, ensures scalability, and reinforces security, providing a glimpse at its transformative role without giving away extensive details. Using AI for Enhanced Cloud Operations: The integration of AI in cloud computing is enhancing operational efficiency in several ways.

The Netflix TechBlog

MARCH 4, 2024

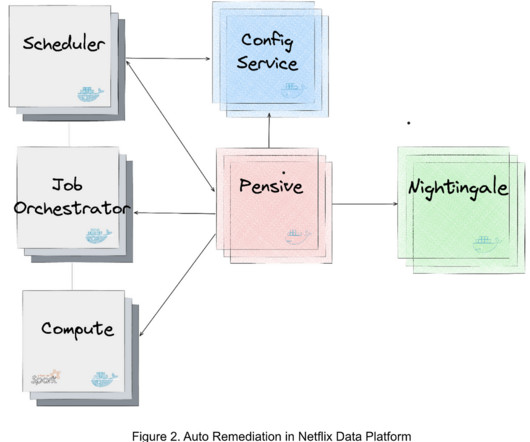

We have deployed Auto Remediation in production for handling memory configuration errors and unclassified errors of Spark jobs and observed its efficiency and effectiveness (e.g., For efficient error handling, Netflix developed an error classification service, called Pensive, which leverages a rule-based classifier for error classification.

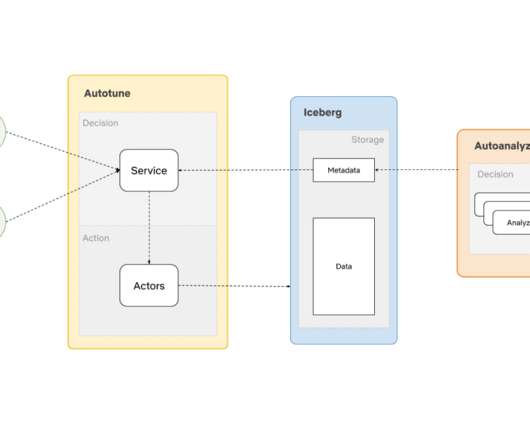

The Netflix TechBlog

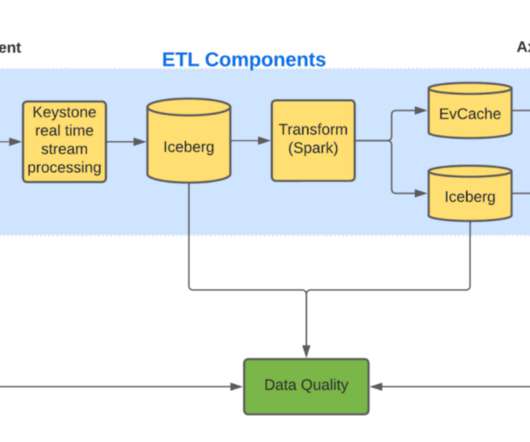

NOVEMBER 20, 2023

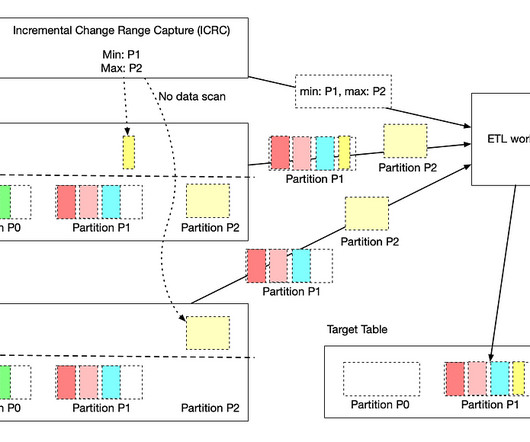

We will show how we are building a clean and efficient incremental processing solution (IPS) by using Netflix Maestro and Apache Iceberg. As our business scales globally, the demand for data is growing and the needs for scalable low latency incremental processing begin to emerge. past 3 hours or 10 days).

Scalegrid

FEBRUARY 8, 2024

Their design emphasizes increasing availability by spreading out files among different nodes or servers — this approach significantly reduces risks associated with losing or corrupting data due to node failure. Variations within these storage systems are called distributed file systems.

The Netflix TechBlog

DECEMBER 21, 2020

We built AutoOptimize to efficiently and transparently optimize the data and metadata storage layout while maximizing their cost and performance benefits. This article will list some of the use cases of AutoOptimize, discuss the design principles that help enhance efficiency, and present the high-level architecture.

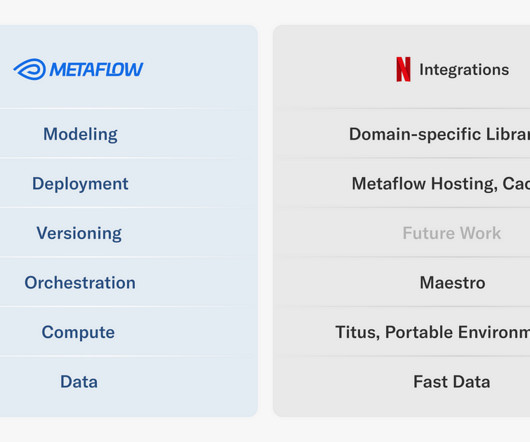

The Netflix TechBlog

MARCH 7, 2024

Since its inception, Metaflow has been designed to provide a human-friendly API for building data and ML (and today AI) applications and deploying them in our production infrastructure frictionlessly. In other cases, it is more convenient to share the results via a low-latency API.

Dynatrace

JULY 24, 2023

In the world of DevOps and SRE, DevOps automation answers the undeniable need for efficiency and scalability. This evolution in automation, referred to as answer-driven automation, empowers teams to address complex issues in real time, optimize workflows, and enhance overall operational efficiency.

Scalegrid

MARCH 22, 2024

They can also bolster uptime and limit latency issues or potential downtimes. Choosing the Right Cloud Services: Choosing the right cloud services is crucial in developing an efficient multi-cloud strategy. They simplify how you orchestrate everything across the diverse ecosystem of cloud services.

Percona

APRIL 17, 2023

CFQ works well for many general use cases but lacks latency guarantees. Two other schedulers are deadline and noop. Deadline excels at latency-sensitive use cases (like databases), and noop is closer to no scheduling at all. On the other hand, MongoDB schema design takes a document-oriented approach.

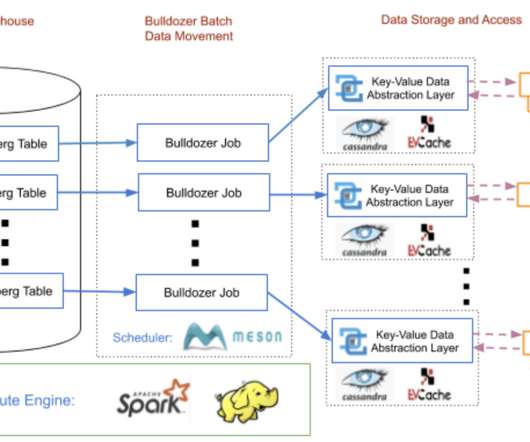

The Netflix TechBlog

OCTOBER 27, 2020

The data warehouse is not designed to serve point requests from microservices with low latency. Therefore, we must efficiently move data from the data warehouse to a global, low-latency and highly-reliable key-value store. As most key-value storage engines support efficiently deleting a namespace (e.g.

The Netflix TechBlog

APRIL 26, 2022

We will share how its design has evolved over the years and the lessons learned while building it. To understand Axion’s design, we need to know the various components that interact with it. The motivation has not changed since then; the design has. Design evolution: Axion fact store has four components: fact

Scalegrid

JANUARY 12, 2024

This article analyzes cloud workloads, delving into their forms, functions, and how they influence the cost and efficiency of your cloud infrastructure. Simply put, it’s the set of computational tasks that cloud systems perform, such as hosting databases, enabling collaboration tools, or running compute-intensive algorithms.

Percona

MARCH 20, 2023

When designing an architecture, many components need to be considered before deciding on the best solution. In this scenario, it is also crucial to be efficient in resource utilization and to scale frugally. Let us also take a look at the latency: here the situation starts to be a little bit more complicated.

Scalegrid

MARCH 14, 2024

In practice, a hybrid cloud operates by melding resources and services from multiple computing environments, which necessitates effective coordination, orchestration, and integration to work efficiently. Tailoring resource allocation efficiently ensures faster application performance in alignment with organizational demands.

Dynatrace

OCTOBER 4, 2022

While data lakes and data warehousing architectures are commonly used modes for storing and analyzing data, a data lakehouse is an efficient third way to store and analyze data that unifies the two architectures while preserving the benefits of both. Data lakehouses deliver the query response with minimal latency. Data warehouses.

IO River

NOVEMBER 2, 2023

A content delivery network (CDN) is a distributed network of servers strategically located across multiple geographical locations to deliver web content to end users more efficiently. The Four Pillars of CDN Design: CDN architecture can be broken down into several building blocks, known as the Four Pillars of CDN Design.

The Netflix TechBlog

MARCH 1, 2021

It supports both high throughput services that consume hundreds of thousands of CPUs at a time, and latency-sensitive workloads where humans are waiting for the results of a computation. The subsystems all communicate with each other asynchronously via Timestone, a high-scale, low-latency priority queuing system. Warm capacity.

The Netflix TechBlog

JUNE 13, 2023

By collecting and analyzing key performance metrics of the service over time, we can assess the impact of the new changes and determine if they meet the availability, latency, and performance requirements. One can perform this comparison live on the request path or offline based on the latency requirements of the particular use case.

ACM Sigarch

MAY 31, 2023

Heterogeneous and Composable Memory (HCM) offers a feasible solution for terabyte- or petabyte-scale systems, addressing the performance and efficiency demands of emerging big-data applications. even lowered the latency by introducing a multi-headed device that collapses switches and memory controllers. The recently announced CXL3.0

IO River

NOVEMBER 2, 2023

A content delivery network (CDN) is a distributed network of servers strategically located across multiple geographical locations to deliver web content to end users more efficiently. When an edge server goes down, end users in the affected region may experience an increase in latency for that specific location.

The Netflix TechBlog

OCTOBER 26, 2021

False negatives are closely related to the statistical concept of power, which gives the probability of a true positive given the experimental design and a true effect of a specific size. As a result, if the test treatment results in a small reduction in the latency metric, it’s hard to successfully identify…

Percona

MARCH 29, 2023

As the amount of data grows, the need for efficient data compression becomes increasingly important to save storage space, reduce I/O overhead, and improve query performance. Snappy compression is designed to be fast and efficient regarding memory usage, making it a good fit for MongoDB workloads.

The Netflix TechBlog

SEPTEMBER 8, 2020

For each route we migrated, we wanted to make sure we were not introducing any regressions: either in the form of missing (or worse, wrong) data, or by increasing the latency of each endpoint. Being able to canary a new route let us verify latency and error rates were within acceptable limits. This meant that data that was static (e.g.

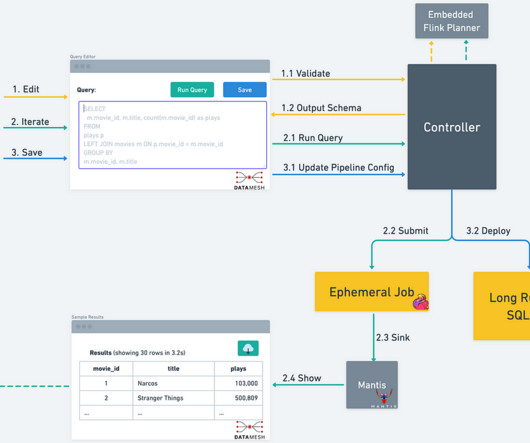

The Netflix TechBlog

NOVEMBER 3, 2023

However, this design decision led to a different set of challenges. Additionally, instead of implementing business logic by composing multiple individual Processors together, users could express their logic in a single SQL query, avoiding the additional resource and latency overhead that came from multiple Flink jobs and Kafka topics.

Dynatrace

APRIL 5, 2021

The 2014 launch of AWS Lambda marked a milestone in how organizations use cloud services to deliver their applications more efficiently, by running functions at the edge of the cloud without the cost and operational overhead of on-premises servers. AWS continues to improve how it handles latency issues. Dynatrace news.

The Morning Paper

NOVEMBER 10, 2019

It’s been clear for a while that software designed explicitly for the data center environment will increasingly want or need to make different design trade-offs than, for example, general-purpose systems software that you might install on your own machines. The desire for CPU efficiency and lower latencies is easy to understand.

The Netflix TechBlog

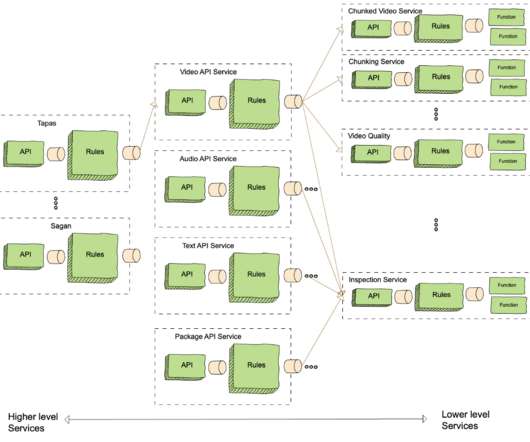

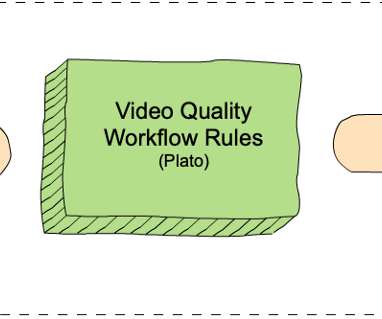

NOVEMBER 2, 2021

For example, when we design a new version of VMAF, we need to effectively roll it out throughout the entire Netflix catalog of movies and TV shows. This article explains how we designed microservices and workflows on top of the Cosmos platform to bolster such video quality innovations. VQS is called using the measureQuality endpoint.

Percona

DECEMBER 11, 2023

A Dedicated Log Volume (DLV) is a specialized storage volume designed to house database transaction logs separately from the volume containing the database tables. This separation aims to streamline transaction write logging, improving efficiency and consistency.

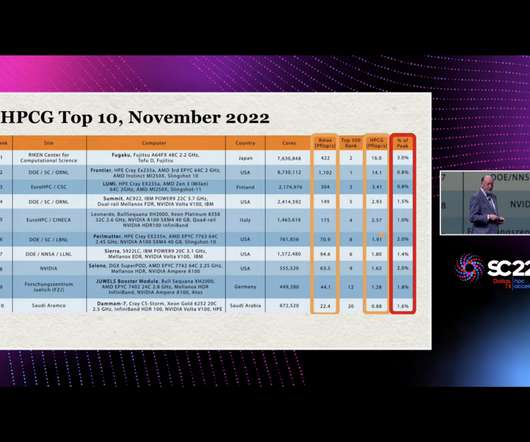

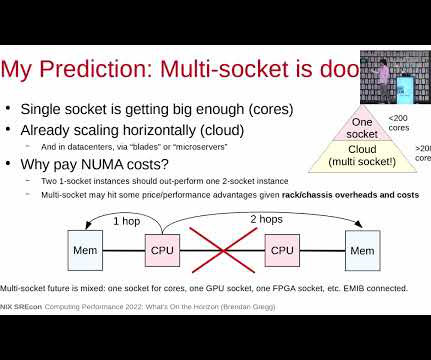

Adrian Cockcroft

JANUARY 20, 2023

Here are some predictions I’m making: Jack Dongarra’s efforts to highlight the low efficiency of the HPCG benchmark as an issue will influence the next generation of supercomputer architectures to optimize for sparse matrix computations. Jack Dongarra talked about the scores, and pointed out the low efficiency on some important workloads.

Dynatrace

DECEMBER 15, 2022

ITOps refers to the process of acquiring, designing, deploying, configuring, and maintaining equipment and services that support an organization’s desired business outcomes. This includes response time, accuracy, speed, throughput, uptime, CPU utilization, and latency. Performance. What does IT operations do?

Brendan Gregg

FEBRUARY 28, 2023

My personal opinion is that I don't see a widespread need for more capacity given horizontal scaling and servers that can already exceed 1 Tbyte of DRAM; bandwidth is also helpful, but I'd be concerned about the increased latency for adding a hop to more memory. Ford, et al., “TCP
