Speed Up Presto at Uber with Alluxio Local Cache

Uber Engineering

JANUARY 16, 2023

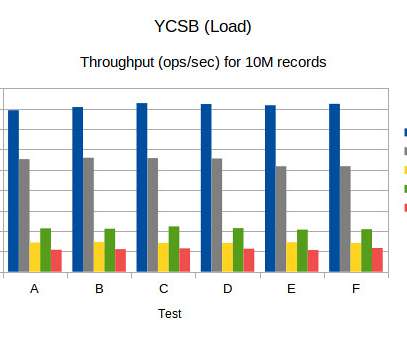

Uber’s interactive analytics team shares how they integrated Alluxio’s local data cache into Presto, the SQL query engine that serves thousands of daily active users querying petabyte-scale data at Uber. By caching frequently read data on the local disks of Presto workers, the integration significantly reduces data scan latency.
