How to use Server Timing to get backend transparency from your CDN

Speed Curve

FEBRUARY 5, 2024

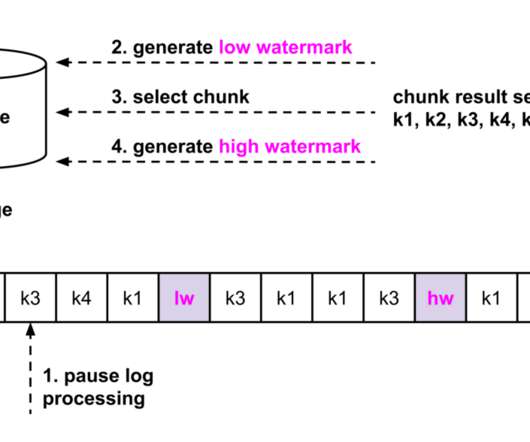

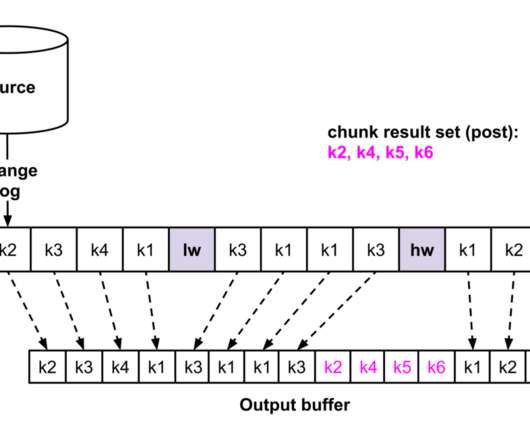

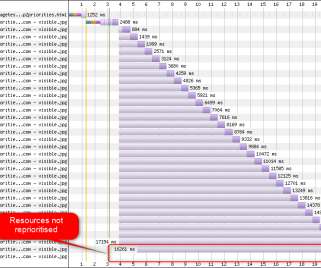

Server-timing headers are a key tool for understanding what's happening inside the black box of Time to First Byte (TTFB). Historically, when looking at page speed, we've tended to ignore TTFB when trying to optimize the user experience. I mean, why wouldn't we?
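To make the "black box" idea concrete, here is a minimal sketch of what a `Server-Timing` response header can carry and how you might break it apart. The metric names in the sample (`cdn-cache`, `edge`, `origin`) are hypothetical and chosen only for illustration; your CDN will likely use its own names. The helper `parse_server_timing` is also an assumption of this sketch, not part of any library, and it deliberately uses a naive split that would not handle a quoted `desc` containing a comma or semicolon.

```python
from typing import Optional, TypedDict


class Metric(TypedDict):
    name: str
    dur: Optional[float]   # duration in milliseconds, if the server sent one
    desc: Optional[str]    # free-form description, if the server sent one


def parse_server_timing(header_value: str) -> list[Metric]:
    """Split a Server-Timing header value into its individual metrics.

    Naive sketch: metrics are separated by commas, and each metric's
    parameters (dur, desc) are separated by semicolons.
    """
    metrics: list[Metric] = []
    for item in header_value.split(","):
        parts = [part.strip() for part in item.split(";")]
        name = parts[0]
        if not name:
            continue  # skip empty entries such as a trailing comma
        metric: Metric = {"name": name, "dur": None, "desc": None}
        for param in parts[1:]:
            key, _, value = param.partition("=")
            key = key.strip().lower()
            value = value.strip().strip('"')
            if key == "dur":
                try:
                    metric["dur"] = float(value)
                except ValueError:
                    pass  # leave dur as None if it is not a number
            elif key == "desc":
                metric["desc"] = value
        metrics.append(metric)
    return metrics


# Hypothetical header value, for illustration only.
sample = 'cdn-cache;desc="HIT", edge;dur=12, origin;dur=135.5'
for metric in parse_server_timing(sample):
    print(metric)
```

With a breakdown like this, a slow TTFB stops being a single opaque number: you can see whether the time went to the edge, the origin, or somewhere in between.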
